Suppose you are part of a development team and want to try out the latest version of the VM your application uses, or make some configuration changes and test them in your development environment. You are not sure whether those changes will be useful or will even work, so you want the ability to roll them back, or better yet, put them under version control. How do you do it? Vagrant comes to the rescue!

What is Vagrant?

Vagrant is an open source tool for building and maintaining portable development environments. As architectures grow more complex, spanning several servers and technology stacks, Vagrant simplifies the task of creating and maintaining the required stack of software and libraries.

Vagrant stores the environment's configuration in a plain text file (the Vagrantfile), which can be committed to your favorite version control system, such as git. If a change doesn't work out, you can easily roll back to the last working state. This noticeably improves developer productivity, which is why Vagrant has become a favorite of many development teams.

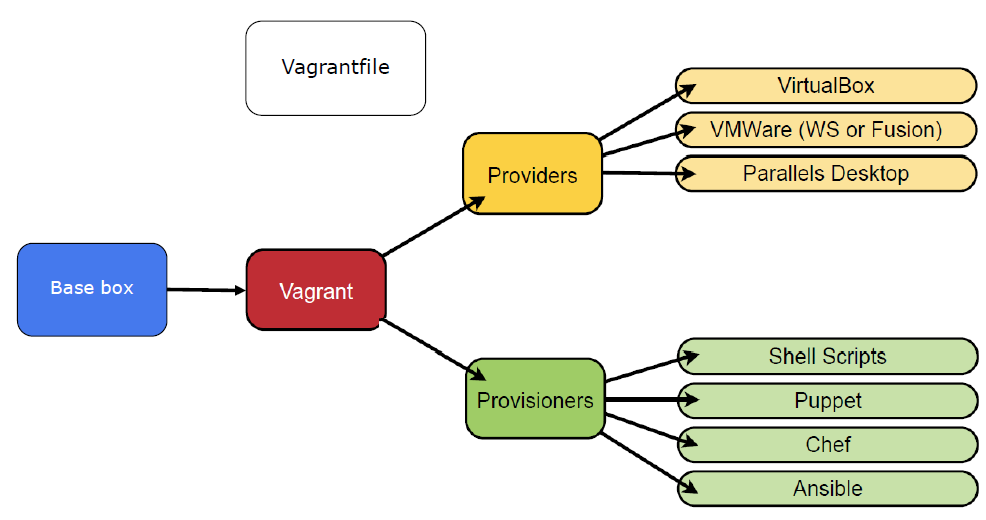

Vagrant is built around two concepts: “provisioners” and “providers”. Provisioners are tools that customize and configure the environment once the machine exists; examples are Chef and Puppet. Providers, on the other hand, are the services or platforms that supply the machines themselves (virtual machines or containers), such as AWS, Docker, and VMware.
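To make the two concepts concrete, here is a minimal sketch of a Vagrantfile, the Ruby-based text file in which Vagrant keeps its configuration and which you would commit to git. The box name `ubuntu/focal64`, the choice of the VirtualBox provider (Vagrant's default), the memory and CPU values, and the inline shell provisioner are illustrative assumptions only; substitute the provider (e.g. AWS, Docker, VMware) and provisioner (e.g. Chef, Puppet) your team actually uses.

```ruby
# Minimal illustrative Vagrantfile (box name and resource values are example assumptions)
Vagrant.configure("2") do |config|
  # Base box the machine is built from (example box; substitute your own)
  config.vm.box = "ubuntu/focal64"

  # Provider: the backend that supplies the machine (VirtualBox assumed here)
  config.vm.provider "virtualbox" do |vb|
    vb.memory = 2048  # MB of RAM for the VM (example value)
    vb.cpus   = 2     # number of virtual CPUs (example value)
  end

  # Provisioner: customizes the machine after it boots.
  # An inline shell script is used here; Chef or Puppet provisioners
  # are declared with config.vm.provision in the same way.
  config.vm.provision "shell", inline: <<-SHELL
    apt-get update
    apt-get install -y git
  SHELL
end
```

Running `vagrant up` in the directory containing this file creates and provisions the machine, and `vagrant destroy` tears it down. Because the whole environment is described by this one text file, undoing a bad change amounts to checking out an earlier commit and recreating the machine.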

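One might be tempted to compare Vagrant with configuration management tools such as Chef, Puppet, or Ansible. However, they serve different purposes, and Vagrant is commonly used together with one of them: Vagrant creates and manages the machine, while the configuration management tool sets up the software running on it.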

Related Keywords

Configuration Management, Docker, Chef, Puppet, Ansible, VMware, AWS, DevOps

![Type-1 and type-2 hypervisor - By Scsami (Own work) [CC0], via Wikimedia Commons](https://upload.wikimedia.org/wikipedia/commons/e/e1/Hyperviseur.png)